21st Century Computing: A World of Technology Makes Your Home Computing Future A Virtual Reality

A typical morning in the year 2001: You wake up, scan the custom newspaper that's spilling from your fax, and walk into the living room. There you speak to a giant screen on the wall, part of which instantly becomes a high-quality TV monitor. When you leave for work, you carry a smart wallet, a computer the size of a credit card. When you come home, you slip on special eyeglasses and stroll through a completely artificial world.

Incredible, but all very possible. "In the next 11 years, you'll see incredible breakthroughs in the home," says Robert Simon, director of Lotus West, the West Coast R & D center of Lotus Development, maker of 1-2-3.

Eleven years is an eon in computing. Take a look back to 1978. Apple was still a startup, VisiCalc didn't exist, and the average home computer huffed along bravely with 48K of RAM. The IBM PC was three years away; most people had never even heard of personal computers. By 2001, our computers of today will seem just as ancient.

To some extent, the future is always dreamland. We tend to imagine that glamorous technology will arrive sooner, cost less, and run better than it really does. Meanwhile, less-heralded advances steal in and become part of our lives. No one can fully predict the future, but we keep trying. Some of the surer bets:

DESKTOP LIBRARIES

"Storage will probably be 50 times what we now have, for the same price," says Tom Lafleur, director of engineering at Qualcomm, a San Diego, California, satellite communications firm. The secret is erasable optical disks.

These devices will hold vast amounts of data. The NeXT computer already offers an erasable optical disk that holds 256 megabytes of data. Optical disks will popularize desktop libraries, which in turn will alter our whole sense of computing. With instant referencing of thousands of volumes of information, computing will be like working with an army of electronic elves, all ready to fetch in a flash any tidbit you like.

"It'll also allow you to store audio and video," says Phillip Robinson, a computer consultant with Virtual Information of Sausalito, California. "You'll be able to capture segments of a show you like, cut them out, and put them in a video report for school. Look at the NeXT machine. I can see the equivalent of that for $1,000."

DISPLAY

High-definition TV (HDTV) offers exceptional resolution, as fine as a motion picture. It has 1125 lines, more than twice the current 525, and promises photorealistic images and stunning 3-D. The screen is wider than today's nearly square one, so you see movies closer to the way they were filmed. HDTV will eventually accept digital as well as analog input.

Japan has pioneered this technology, which will almost certainly lead to HDTV computer screens. "Its impact is close to the year 2001," says Paul Saffo, an analyst at the Institute for the Future, a research firm in Menlo Park, California.

Others predict even higher resolution. "The display will be 1500 lines, seamless, with 35-millimeter resolution," says Marty Perlmutter, partner at The Green Street Gang, a San Francisco multimedia firm. But even this forecast may fall short, as MIT's Media Lab is now experimenting with displays of 2000 lines.


PREPARING FOR 2001

Get a jump on the twenty-first century today by exploring the avenues likely to lead there. For some innovations, like HDTV and voice recognition, you'll have to wait. But other essential building blocks for future computing are here today. Here are some guideposts on your road to the future.

CD-ROM is appearing for a broad variety of computers. Several disks contain desktop libraries, hypertext encyclopedias, and video. If you plan to buy a CD-ROM drive, be aware that erasable optical disks will eventually supersede it.

ISDN is some years away, but you can already investigate the remarkable world of online services. If you're a novice telecommunicator, you may want to start with Prodigy (run by IBM and Sears), which is available in several metropolitan areas, including New York, Los Angeles, Washington, Baltimore, Atlanta, and Detroit. The service should reach 42 percent of American households by the end of this year and go nationwide by the early 1990s. Prodigy is easy to use and offers a panoply of features: online shopping, news, advertising, stock purchasing, and electronic mail. Or you could sign on to a local BBS, or even tap into one of the giant databases such as DIALOG.

Desktop video software makes it easier to create full-motion graphics, an application that will grow in popularity as the power of home computers increases. The many animation programs already on the market provide excellent ground on which to learn the rules of moving pictures.

Explore object-oriented programming without waiting for a NeXT computer by learning HyperCard or some other hypertext programming application. Plain-English programming languages and graphics-oriented programming languages are setting the stage for the personal software applications of the future. If you want to try the NeXT, visit a local Businessland computer store and see if it has one on display.

The year 2001 is still a ways off, but you can make an effort to meet it on the road.

COMMUNICATIONS

The completion of a nationwide Integrated Services Digital Network (ISDN) will throw this field wide open. The data equivalent of the interstate highways, ISDN will simultaneously transmit voice, video, and computer data over existing phone wires. The first segment of ISDN is already in place: It shows the caller's number when the phone rings.

"It'll definitely replace the need to use modems," says Greg Simons, president of Primera Software in Berkeley, California. "The things we enjoy in an office where we hardwire computers, you'll be able to enjoy all around the world. You can have a voice-mail network all around the United States."

ISDN will make giant databases much more accessible. "If I'm going to Seattle and I wanted to read the Seattle paper, I could do it now," says Simons. "Or if I wanted to see what's on TV there, I could see it right now."


THE NEW ELECTRONIC BRAINS

Although once tagged as electronic brains, digital computers have never been very brainlike. But research in two areas, fuzzy logic and neural networks, holds out the tantalizing vision of a more human home computer.

In 1965, Lotfi Zadeh, a professor at UC Berkeley, invented fuzzy logic, a way of reasoning about ill-defined notions. It has since grown into an academic discipline with major implications for computers.

Traditional logic analyzes the statement "Bob is tall" by setting a cutoff line, such as 5 feet 10 inches, and matching Bob's height against it. If he stands 5 feet 11 inches, he's tall; if he's 5 feet 9 inches, he's not tall. In real life, no cutoff line exists. Bob is "very tall," "somewhat tall," or "a bit tall." Fuzzy logic captures such essentials by creating partial memberships in fuzzy sets. For example, if Bob were 7 feet 2 inches, he might receive a 1.0 membership in the set of tall people, that is, a full membership. If he were 6 feet 2 inches, he might have .80 membership, fairly complete. If he were 5 feet 6 inches, he might have .05 membership, very slight.

Fuzzy logic excels at judgment calls; the world's best chess programs use it. It's reviving expert systems and currently runs an ultramodern Japanese subway, cement kilns, and robots. NASA is exploring its potential for controlling extravehicular space robots and the Mars Rover.

Other scientists are approaching the brain more directly, attempting to mimic it with special machines called neural networks. Some have achieved startling results. Neural networks are composed of numerous identical chips, with a web of synapselike connections between them. As in the brain, these links grow stronger or weaker according to use. They store data as patterns of cell-to-cell connections, as the brain apparently does, and scientists often do not even know where particular items are. But it doesn't matter, because data is accessed by content, not by specific address: You reach one memory by stimulating another one associated with it.

Neural networks can perform tricks of association that are awkward for conventional digital computers. They can function even after partial destruction, though their performance dims. Finally, to the surprise of scientists, they appear to need periods of rest, where they "sleep" and even "dream."

Neural network devices also improve their performance over time. One, called NETtalk, learned how to read English prose aloud with 98-percent accuracy in only 16 hours and with no programming. Ultimately, these machines might perform such human feats as understanding and summarizing.

Neither fuzzy computers nor neural networks have fully proven their potential, but research is moving apace. If they continue to shine, they may well ornament our desktops by the turn of the century.
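To make partial membership concrete, here is a minimal Python sketch. It assumes a simple piecewise-linear curve for "tall"; the breakpoints are our own illustrative choice, picked so that 5 feet 6 inches (66 inches), 6 feet 2 inches (74), and 7 feet 2 inches (86) get the .05, .80, and 1.0 memberships mentioned above. The names `crisp_tall`, `fuzzy_tall`, and `very` are hypothetical, and the `very` hedge (squaring a membership value) follows a convention Zadeh proposed elsewhere, not anything described in this article.

```python
from bisect import bisect_right

# Hypothetical breakpoints: (height in inches, membership in "tall").
# The middle three reproduce the example values; the straight lines
# between them are an assumption made for illustration.
TALL_POINTS = [(60, 0.0), (66, 0.05), (74, 0.80), (86, 1.0)]


def crisp_tall(height_in, cutoff=70):
    """Traditional logic: one cutoff line, so you are tall or you are not."""
    return height_in >= cutoff


def fuzzy_tall(height_in):
    """Fuzzy logic: a degree of membership between 0.0 and 1.0."""
    xs = [x for x, _ in TALL_POINTS]
    if height_in <= xs[0]:
        return TALL_POINTS[0][1]
    if height_in >= xs[-1]:
        return TALL_POINTS[-1][1]
    i = bisect_right(xs, height_in)  # first breakpoint above this height
    (x0, y0), (x1, y1) = TALL_POINTS[i - 1], TALL_POINTS[i]
    return y0 + (y1 - y0) * (height_in - x0) / (x1 - x0)


def very(membership):
    """Zadeh-style hedge: squaring makes "very tall" harder to earn."""
    return membership ** 2


for inches in (66, 69, 71, 74, 86):
    print(inches, crisp_tall(inches), round(fuzzy_tall(inches), 2))
```

With a 5-foot-10 cutoff, the crisp test flips from "not tall" at 69 inches to "tall" at 71, while the fuzzy version climbs smoothly from about .33 to about .52.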
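The idea of memory "accessed by content, not by specific address" can be imitated in software. The sketch below is a tiny Hopfield-style network, a standard textbook model chosen here for illustration; it does not describe NETtalk or any particular chip. Eight simulated units store two made-up patterns using a Hebbian rule (the link between two units grows stronger when they agree and weaker when they disagree), and recall starts from a noisy or half-missing cue rather than from an address. The pattern names and functions are hypothetical.

```python
# Two hypothetical 8-unit "memories", written as +1/-1 activity patterns.
MEMORY_A = [1, 1, 1, 1, -1, -1, -1, -1]
MEMORY_B = [1, -1, 1, -1, 1, -1, 1, -1]


def train(patterns):
    """Hebbian rule: strengthen links between units that fire together,
    weaken links between units that disagree. No unit links to itself."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j] / n
    return weights


def recall(weights, cue, sweeps=5):
    """Start from a noisy or partial cue (0 = unknown) and let each unit
    follow the weighted vote of all the others until the state settles."""
    state = list(cue)
    n = len(state)
    for _ in range(sweeps):
        for i in range(n):  # update one unit at a time, in order
            total = sum(weights[i][j] * state[j] for j in range(n))
            state[i] = 1 if total >= 0 else -1
    return state


weights = train([MEMORY_A, MEMORY_B])
noisy_a = [-1, 1, 1, 1, -1, -1, -1, -1]  # first unit flipped
half_b = [1, -1, 1, -1, 0, 0, 0, 0]      # second half unknown
print(recall(weights, noisy_a) == MEMORY_A)  # True
print(recall(weights, half_b) == MEMORY_B)   # True
```

Nothing in `recall` looks up where a memory lives; the stored patterns exist only as the web of weights, and the cue itself selects which one comes back. With only eight units, a toy like this can hold very few patterns before recall starts to fail.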

The potential for such hookups is obvious; the fallout, especially for non-computer industries, could be enormous. "Movies will probably be squirted into the home through the telecommunications lines and compressed into eight seconds on the erasable disk in your living room," says Perlmutter. "That'll wreak havoc with the corner video store."

THE MULTIMEDIA CENTER

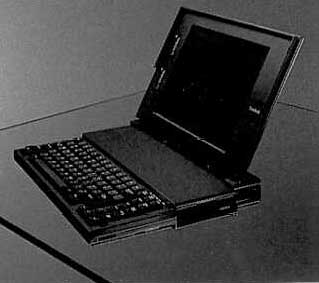

"Certainly, by the year 2001, we'll have an integrated home communications center," says Lee Felsenstein, inventor of the first portable computer, the Osborne 1, and president of Golemics in Berkeley, California. "That will be the home computer, combined with the ISDN telephone connection; the HDTV, which will be happening; the various information technologies ranging down to answering machines and fax; and general-information utility use."

"I'm frightened to use the term home computer," says Nat Goldhaber, a Silicon Valley venture capitalist who makes his headquarters in Berkeley, California. "The computer for the vast majority of people will disappear into this integrated telecommunications device that will be in the home."

Saffo agrees. "The personal computer as we know it will persist longer in the home than in business," he predicts. "But by 1996–1997, they'll start to disappear. They'll become a low-end commodity like the typewriter."

However, Saffo notes that a unified TV, stereo, and computer system will initially be only superficial, a matter of unified control. "Deep, true multimedia is where the computer knows everything that's on the screen. We'll be lucky to get that kind of depth by the year 2001."

MULTITASKING

By the twenty-first century, multitasking will be everywhere. "A system will be absurdly obsolete without multitasking," says Robinson, "because the computer will be hooked up to a phone line that'll be delivering video images and fax information." It will be like having a pocketful of machines in a single device. Imagine your computer playing an aria in the background as you write, search an online database, or blast space blobs.
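One simple way a single processor can seem to do several jobs at once is time slicing: each job gets a short turn, then steps aside for the next. The Python sketch below assumes cooperative, round-robin scheduling, with generators standing in for programs; the task names are hypothetical, and real multitasking systems usually interrupt jobs on a timer rather than waiting for them to step aside.

```python
from collections import deque


def task(name, steps):
    """A hypothetical job that does one slice of work per turn."""
    for i in range(1, steps + 1):
        yield f"{name} {i}/{steps}"


def run_round_robin(tasks):
    """Give each job one time slice in turn until every job has finished."""
    queue = deque(tasks)
    log = []
    while queue:
        current = queue.popleft()
        try:
            log.append(next(current))  # run one slice
        except StopIteration:
            continue  # job finished; drop it from the queue
        queue.append(current)  # back of the line for its next turn
    return log


log = run_round_robin([task("aria", 3), task("fax", 2), task("editor", 2)])
print(log)
```

The log interleaves the aria, the fax, and the editor one slice at a time, which is what a user perceives as simultaneous work.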

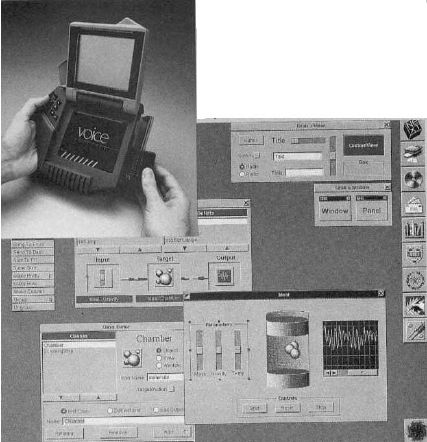

VOICE RECOGNITION

The ultimate input tool, voice recognition could bring computers to almost every level of society. Many observers see it as inevitable by the year 2001. "You'll talk to your TV set, and it'll customize itself and pull things off the air in the categories you told it," says Perlmutter. "‘Give me everything on Madonna, everything on Dan Quayle.’ It'll look for that and grab it from the 2000 channels it's scanning."

Greg Simons of Primera goes even further. "You'll have the most powerful Sun computer for a thousand bucks retail, and that computing power will be used to give a better interface so [anyone] could use it," he says. "[You] could talk to it, and there'd be a huge flat-panel screen on the wall. It'd be just an appliance, a looking glass into a whole sea of databases, libraries, entertainment services, newspapers, and TV." Such a machine, he adds, could even recognize body input, such as waving hands or swinging a bat.

INTERACTIVITY

The fusion of computer, optical disk, and HDTV will produce dazzling interactive entertainment. "Instead of watching a movie about the Oregon Trail," Saffo says, "the kids will be able to play the role of a character."

Joel Pitt, senior software designer at JWP Information Systems in Old Bridge, New Jersey, suggests that old movies could be turned into the cinematic equivalent of adventure games. "I'm sure in some way you'll be able to redo Casablanca," he says, "so at the end you could have Colonel Strasser shoot Bogart. Or hold on to Ingrid Bergman."

REMOTE CONTROL

"Remote control is a big item," Saffo says. "We'll see it on everything that should have it and on a lot that doesn't need it at all." By 2001, computers should vaporize annoying VCR controls. "The VCR is much more difficult to use than it would be if a computer were controlling it," says Lotus's Robert Simon. "For instance, you could tell it to record all episodes of a particular series, rather than your preprogramming it." You could also store the shows on optical disk for direct random access, without rewinding.

INTERFACES

"Software will get more and more humane," says Pitt. "It'll be easier to access, more obvious to the user, more fluent in terms of its abilities to respond to the coarse level of communication which humans are used to." Icon-based interfaces will be everywhere, and hypermedia tools like HyperCard should be more common and simpler to use.

EXPERT SYSTEMS

Expert systems were once considered the golden chariot into the future, but they've been plagued by surprising problems. For example, it's difficult to have a software application make a judgment call without a huge base of knowledge. That takes a lot of software, and a lot of money. But some observers predict a revival.

"I think expert systems will be woven into programs like those that access databases," says Primera's Simons. "Like HyperCard with real brains. The classic Alan Kay example is a system that's your buddy, your link to all this data, and it assembles a newspaper for you every day."

For example, such a system could note that when you read the paper you skip the local murders and astrology column, but always go to page 12 for over-the-counter stocks. With that knowledge, it would create a one-page summary of just the news you want every morning. It could also cast its net beyond any one publication, scanning the financial sections of all major papers, selecting the most interesting stories, and serving them up.
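The habit-watching newspaper described above can be sketched in a few lines, under loud assumptions. This Python illustration simply counts how often each section is read or skipped and keeps sections read at least 60 percent of the time; it is a frequency filter standing in for the far richer knowledge base a real expert system would need. The class names, section labels, threshold, and sample stories are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Story:
    paper: str
    section: str
    headline: str


class ReadingProfile:
    """Tracks which newspaper sections a reader opens and which they skip."""

    def __init__(self):
        self.read = Counter()
        self.skipped = Counter()

    def observe(self, section, was_read):
        (self.read if was_read else self.skipped)[section] += 1

    def interest(self, section):
        """Share of past appearances the reader actually read (0.5 if unseen)."""
        seen = self.read[section] + self.skipped[section]
        return 0.5 if seen == 0 else self.read[section] / seen

    def build_edition(self, stories, threshold=0.6, limit=10):
        """Keep stories from well-read sections, most-read sections first."""
        kept = [s for s in stories if self.interest(s.section) >= threshold]
        kept.sort(key=lambda s: self.interest(s.section), reverse=True)
        return kept[:limit]


# A made-up week of reading habits.
profile = ReadingProfile()
for day in range(7):
    profile.observe("local crime", False)
    profile.observe("astrology", False)
    profile.observe("otc stocks", True)
    profile.observe("world", day < 5)  # read on five days out of seven

# Hypothetical stories gathered from two different papers.
stories = [
    Story("Paper A", "local crime", "Burglary on Elm Street"),
    Story("Paper A", "world", "Summit talks resume"),
    Story("Paper A", "otc stocks", "Tech issues rally"),
    Story("Paper B", "astrology", "Your stars this week"),
    Story("Paper B", "otc stocks", "Small caps slip"),
    Story("Paper B", "sports", "Home team wins opener"),
]

for story in profile.build_edition(stories):
    print(f"{story.paper}: {story.headline}")
```

Because the filter works on sections rather than on any one publication, it casts the same net across every paper it is fed, as the paragraph above suggests.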

"They'll come into the home on something like a fax machine," Saffo says of customized newspapers. He also suggests that expert systems may appear in household appliances. "Servicing appliances is a problem, and I think they'll be increasingly designed with replaceable modules," he says. "We'll see onboard diagnostics, where a consumer can have the washer self-diagnose and indicate which black box to pull out and replace."


THE PARALLEL PROMISE

"Everything that happens at the high end is a harbinger of things that come to the desktop," says Phillip Robinson, computer consultant with Virtual Information of Sausalito, California. Right now, the most significant news at the high end is the advent of a long-awaited architecture: parallel processing.

Conventional computers work serially, sending one chunk of data at a time through a single chip. The most obvious route to more power is to place several processors in harness. Already appearing on supercomputers, parallel architecture may well reach the home by 2001.

Until recently, parallel processing has been snarled in the problem of synchronizing the chips. "Critics say, ‘If you had to plow a field, would you rather do it with two oxen or a thousand bunnies?’" says Justin Rattner, director of technology at Intel Scientific Computers in Portland, Oregon. "The trick with a thousand bunnies is getting them all to hop at the same time."

"It takes a lot of software intelligence to know how to split a job up into parts that multiple processors can [perform]," says Robinson, "particularly when the results of a second calculation depend on the first." But software designers are surmounting this obstacle, and observers say parallel processing will soon break into general acceptance at the high end. From there it could be a fairly straight ride to controlling the family's giant flat screen with a slew of processors.

"There will be a lot of information flowing into the home," says Andy Halford, director of software development at Alliant Computer Systems of Littleton, Massachusetts. "You'll be able to get video pictures in windows on your PC, and that might be games for kids, stock-market returns for the investor, video shopping. Travel agents would be able to show you a city. All that requires tremendous computer power, and the only way to achieve that is through parallelism."
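Robinson's point about dependent calculations shows up even in a job as simple as keeping running totals, where every result depends on everything before it. The sketch below is a blocked parallel prefix sum, a standard textbook technique chosen for illustration rather than anything Intel or Alliant described. Workers first total their own chunks independently; then a short serial step (the moment all the bunnies must wait for one another) works out where each chunk starts; finally the workers finish their chunks independently again. It uses Python's `ThreadPoolExecutor`. Because of CPython's global interpreter lock, threads will not actually speed up pure-Python arithmetic, so treat this as a picture of how the work divides; `ProcessPoolExecutor` offers the same `map` interface for real multi-core runs (with the usual `if __name__ == "__main__":` guard). The function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import accumulate


def split(data, parts):
    """Cut the job into roughly equal, contiguous chunks."""
    size = max(1, -(-len(data) // parts))  # ceiling division, at least 1
    return [data[i:i + size] for i in range(0, len(data), size)]


def running_totals(chunk, offset):
    """Running sums of one chunk, starting from a known offset."""
    return list(accumulate(chunk, initial=offset))[1:]


def parallel_prefix_sum(data, workers=4):
    chunks = split(data, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Phase 1: independent work; no chunk needs any other chunk.
        totals = list(pool.map(sum, chunks))
        # Phase 2: the dependency. Each chunk must know the sum of every
        # chunk before it, so these offsets are computed serially.
        offsets = list(accumulate(totals, initial=0))[:-1]
        # Phase 3: independent again, now that each chunk has its offset.
        pieces = pool.map(running_totals, chunks, offsets)
        return [x for piece in pieces for x in piece]


print(parallel_prefix_sum(list(range(1, 11)), workers=3))
# [1, 3, 6, 10, 15, 21, 28, 36, 45, 55]
```

Only phase 2 is serial, and it touches one number per chunk rather than one per item, which is why splitting the job still pays off as the data grows.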

HOME CONTROL

"I think, by the year 2001, in some way or another, computers will be at the center of household control," says Pitt of JWP. Already they're regulating home heating and lights. And with the right mechanisms, says Pitt, your refrigerator could register the food it contains.

"I think there's going to be a million tiny computers controlling everything in the home that's now controlled mechanically," says John Golini, electronics consultant at Jay Gee Programming in Los Gatos, California. Door locks will be microcontrolled from a keypad; computers could also regulate cosmetic mirrors, changing the amount of magnification and light.

PORTABILITY

Instead of the notebooks we carried to school, our kids will be carrying computer notebooks. And instead of keyboards, students will use electronic pens and special tablets to jot down their lecture notes. Qualcomm's Lafleur expects we'll have wallet-size computers by the year 2001. "Look at the average wallet," he says. "A dozen credit cards and notes and car insurance information. I'd want something the size of or smaller than a wallet, and all that information available to me. You could call it a smart wallet."


FUTURE SPEAK

If you want to move into the future, you've got to talk that talk. Here are a few terms to loosen your tongue:

Compact Disc-Read Only Memory (CD-ROM): Compact discs that store hundreds of megabytes of data and can't be written to or erased.

expert systems: Customized computer systems that recognize and retrieve information based on the user's own preferences and a preprogrammed base of knowledge.

fuzzy logic: A system of logic that assigns degrees of truth between 0 and 1 to vague notions such as "tall," rather than forcing every judgment to be simply true or false.

high-definition TV (HDTV): A motion-picture-quality television that boasts 1125 lines (instead of the conventional 525 lines) and a wider screen than the nearly square one of today's sets.

Integrated Services Digital Network (ISDN): A nationwide network that will use existing telephone lines to transmit voice, video, and computer data.

multimedia: The integration of audio, video, graphics, and communication technologies within a computer system.

multitasking: The ability to perform more than one function at a time.

neural networks: A series of identical chips with synapselike connections, similar to the brain, that strengthen or weaken according to use.

object-oriented programming (OOP): A method of programming in which applications are built from objects, self-contained units that bundle data with the procedures that act on it.

parallel processing: A method of computing in which multiple processors are assigned separate, interdependent pieces of a larger computing task.

virtual reality: An artificial world of experience created through use of computerized devices and controlled simulations.

voice recognition: A computer input method through which computing systems and electronic appliances are activated or controlled by voice or audio commands.

—Jeff Sloan


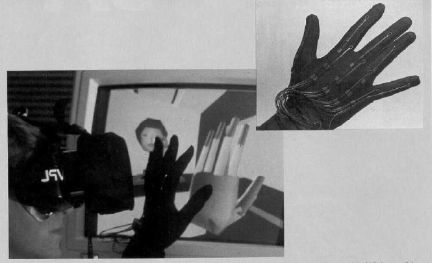

BRAVE NEW WORLDS

Virtual Reality: the creation of artificial worlds of experience. VR devices place you inside a controlled hallucination, the ultimate simulation. "We've designed computerized clothing you wear over each of the sense organs," says Jaron Lanier, CEO of VPL Research in Redwood City, California. For example, EyePhones is a heavy pair of glasses, rather like a scuba mask. Put them on and you find yourself transported to a new 3-D environment. Don a DataGlove, extend your hand, wiggle two fingers, and you can walk through that scene. If you want, reach down and pick up a virtual object.

Beyond entertainment, virtual reality could serve a variety of other uses, such as neuromotor training. "My favorite example is juggling," Lanier says. "You can make the balls move slowly at first, then speed them up as you get better. In education, you could pick up molecules and turn them around in your hand. If you want to shop from home, you could try out new houses, new cars." Lanier is currently working with Autodesk of Sausalito, California, maker of computer-aided design programs, using the glasses and glove to "walk" through AutoCAD files as if they were actual buildings.

Virtual reality has social aspects, too. "It's kind of like a costume party," Lanier says. "You can choose your own appearance and create shared worlds with other people. I see it as a social medium over the telephone, where people will have collective parties in virtual reality."

Author Stewart Brand notes that today's movie theaters provide a kind of immersion in a virtual reality, and people go to them partly for that experience. "But [VR] is as much of an immersion as you can get without piping into your nervous system," he says. The experience is so compelling that the threat of virtual addiction could itself become a reality.

Currently, the glasses and glove are very costly, though VPL Research has licensed the technology to Mattel for a low-end product called PowerGlove, which will act as a controller for Nintendo games. But Lanier expects VR to be common consumer technology in the next decade.

OUTPUT

Declining prices will make laser printers a familiar feature in the home; dot-matrix printers will slip into oblivion. CD-ROM and other computer optical discs will equal audio compact disks in sound quality. But future computer output may be even more sophisticated than printing or sound.

Primera's Simons suggests an output device that resembles a pair of glasses that can be slipped on anywhere for instant access to information.

We can expect to see better scanners, faster chips, more special-purpose chips, optical wiring, software that encompasses several applications under one roof (the integrated packages we use today are the forerunners), hypertext encyclopedias, and an array of innovations far beyond the power of prognostication.

The future, as The Amazing Criswell informed us in Plan Nine from Outer Space, is where we'll spend the rest of our lives. If our experts are right, it should be a remarkable sojourn.

Paul Freiberger is coauthor of Fire in the Valley, one of the first books to detail the history of personal computing. Dan McNeill has written several books and articles on the development of personal computers.